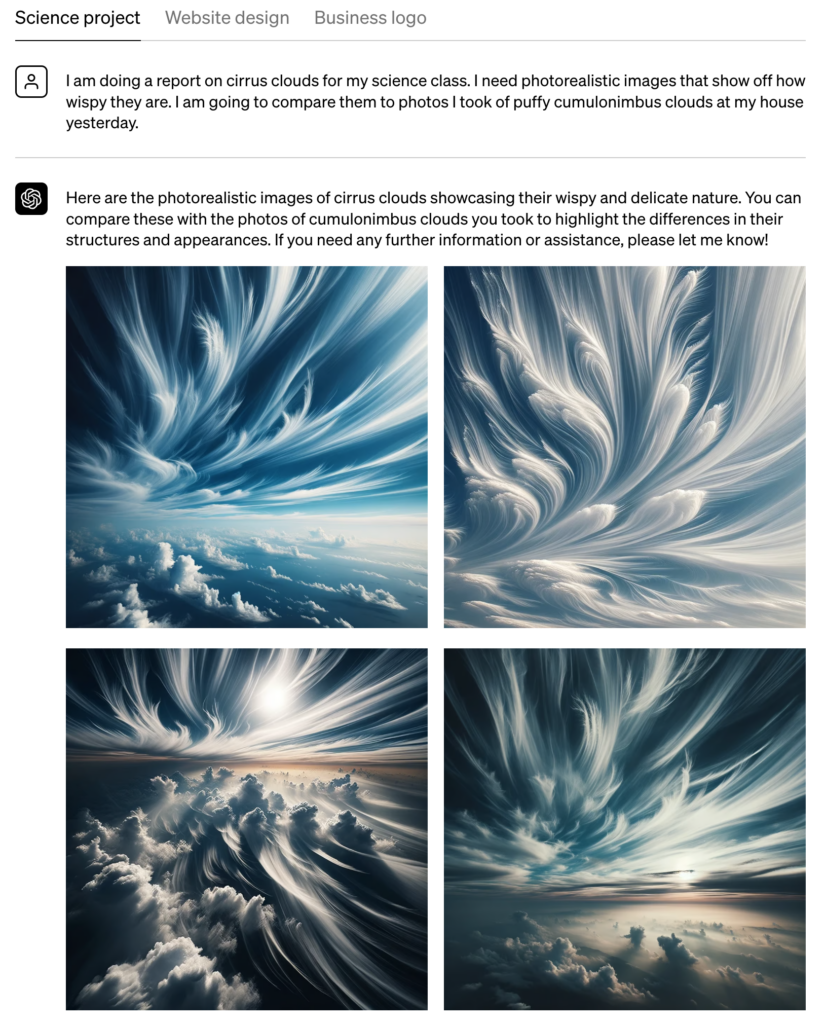

OpenAI has announced the integration of its advanced image-generating model, DALL·E 3, into ChatGPT Plus and Enterprise. This move aims to offer users a more interactive and visually engaging experience by generating high-quality images based on textual prompts. The integration is designed to benefit a wide range of applications, from content creation to business presentations.

The company has implemented a multi-layered safety system to restrict DALL·E 3 from producing potentially harmful content, such as violent or adult imagery. This safety system checks both the user prompts and the generated images before they are shown to users. OpenAI has also worked with early users and expert evaluators to identify and fix shortcomings in its safety mechanisms. This process has helped the company recognize edge cases where the model might generate inappropriate content, such as sexually explicit images, and stress-test the model's ability to produce misleading visuals.

According to OpenAI’s blog post, in preparation for the wide deployment of DALL·E 3, OpenAI has taken measures to reduce the likelihood of the model generating content that mimics the style of living artists or portrays public figures. The company has also worked on improving the demographic representation in the images generated by the model. For more details on the preparations for DALL·E 3’s deployment, OpenAI refers users to the DALL·E 3 system card.

OpenAI emphasizes the importance of user feedback for continuous improvement. Users of ChatGPT can report any unsafe or inaccurate outputs by using a flag icon, which will inform the company’s research team. OpenAI believes that listening to a broad and diverse user base is crucial for responsible AI development and deployment.

The company is also in the early stages of evaluating a new internal tool known as a “provenance classifier.” This tool is designed to identify whether an image has been generated by DALL·E 3. Preliminary internal tests show that the classifier is over 99% accurate in identifying unmodified images generated by DALL·E 3, and retains over 95% accuracy even when the image has undergone common modifications like cropping or resizing. Despite these results, the classifier can only indicate that an image was likely generated by DALL·E 3; it does not yet support definitive conclusions. OpenAI expects this tool to be part of a broader set of techniques aimed at helping people understand if audio or visual content is AI-generated.

OpenAI anticipates that the provenance classifier will be a valuable asset in the ongoing challenge of determining the origins of AI-generated content. This is a challenge that the company believes will require collaboration across various stakeholders in the AI industry.

Featured Image via Unsplash
