Stability AI, a leading name in open-source generative AI, has launched Stable Audio, a new architecture for audio generation. The release is part of broader advances in diffusion-based generative models, which Stability AI says have significantly improved the quality and controllability of generated images, video, and audio.

In a blog post published on 13 September 2023, Stability AI points out that traditional audio diffusion models typically produce fixed-size outputs, such as 30-second audio clips. This becomes a problem when the goal is to generate audio of varying lengths, like full-length songs. Stability AI notes that these models are often trained on random segments cropped from longer audio files, so a generated clip can be an arbitrary section of a song that starts or ends mid-phrase.

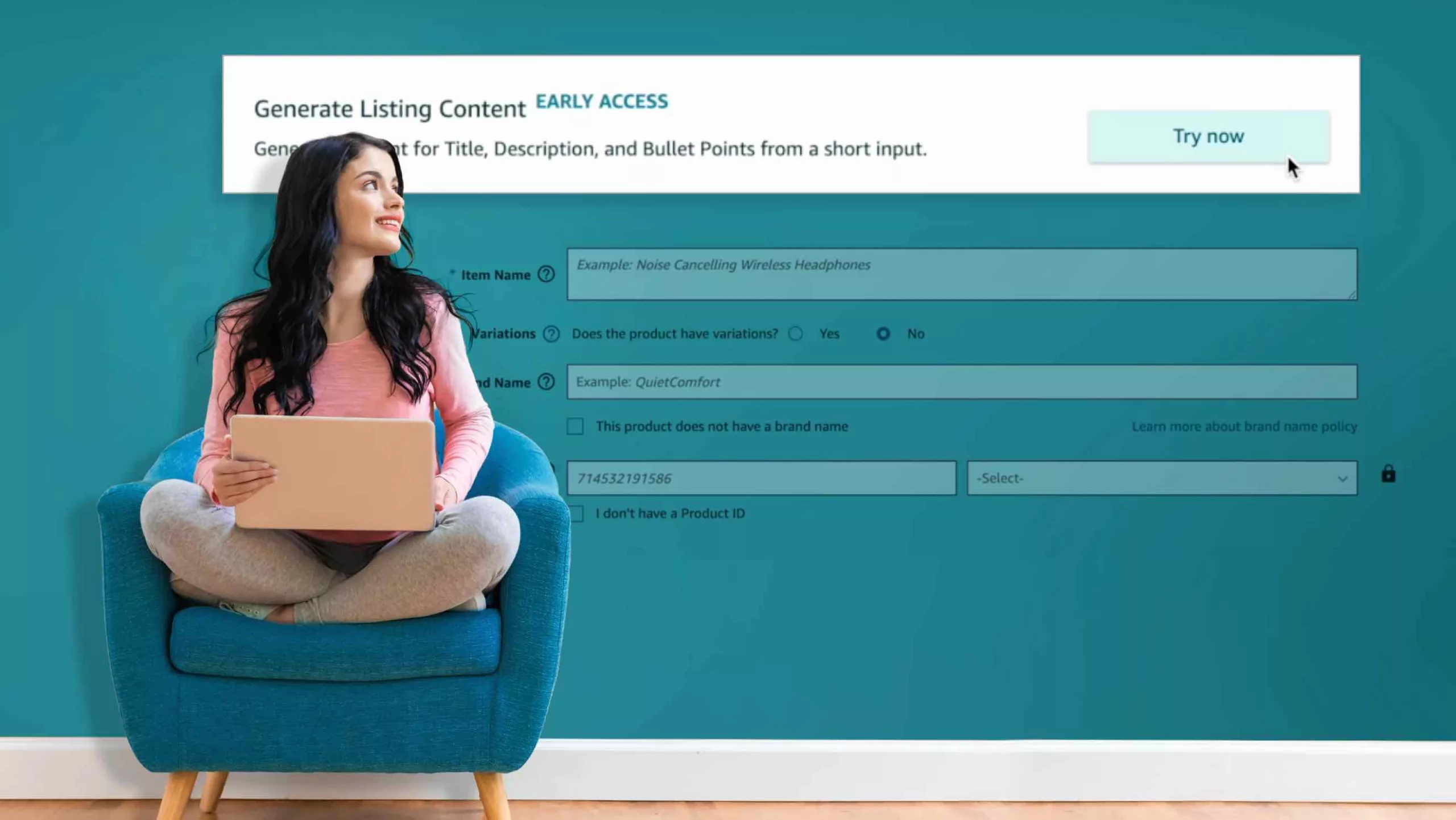

Stability AI introduces Stable Audio as a solution to these challenges. The company states that this new architecture incorporates text metadata, audio file duration, and start time as conditioning variables, allowing for more control over both the content and length of the generated audio.
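As a rough illustration of the timing conditioning described above, the sketch below crops a fixed-length training window from a longer track and records where the window starts and how long the full track is. The helper name `crop_with_timing`, the field names `seconds_start` and `seconds_total`, and the 95-second window are assumptions chosen for illustration, not Stability AI's code.

```python
import random

def crop_with_timing(track_seconds, window_seconds=95.0, rng=None):
    """Pick a random fixed-length window and return its timing conditioning.

    Hypothetical helper for illustration; not Stability AI's implementation.
    """
    if rng is None:
        rng = random.Random()
    # Tracks shorter than the window always start at 0.
    latest_start = max(0.0, track_seconds - window_seconds)
    start = rng.uniform(0.0, latest_start)
    return {
        "seconds_start": start,          # where the window begins in the track
        "seconds_total": track_seconds,  # duration of the full source file
    }

example = crop_with_timing(track_seconds=240.0, rng=random.Random(0))
print(example)
```

Under this kind of scheme, requesting a clip of a particular length at generation time would plausibly amount to passing a start of 0 and the desired total duration as conditioning values.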

Stability AI highlights the rapid inference time of Stable Audio as one of its standout features. According to the company, the model operates on a highly compressed latent representation of audio, enabling it to render 95 seconds of stereo audio at a 44.1 kHz sample rate in less than a second when run on an NVIDIA A100 GPU.
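To put those figures in perspective, the short calculation below works out how many raw sample values a 95-second stereo clip at 44.1 kHz contains, and the minimum speed-up over real time that a sub-second render implies. The 1.0-second render time is an assumed upper bound taken from "less than a second", not a measured value.

```python
SAMPLE_RATE_HZ = 44_100   # from the article
CHANNELS = 2              # stereo
CLIP_SECONDS = 95         # from the article

samples_per_channel = SAMPLE_RATE_HZ * CLIP_SECONDS
total_samples = samples_per_channel * CHANNELS

# Assumed upper bound on render time: "less than a second" -> 1.0 s.
min_realtime_factor = CLIP_SECONDS / 1.0

print(samples_per_channel)   # 4189500
print(total_samples)         # 8379000
print(min_realtime_factor)   # 95.0
```

That is roughly 8.4 million sample values per clip, which helps explain why the model works on a compressed latent representation rather than on raw audio.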

Stable Audio employs a multi-part architecture similar to that of Stability AI's Stable Diffusion model. It uses a variational autoencoder (VAE) to compress stereo audio, a text encoder based on the CLAP model to handle text prompts, and a U-Net-based conditioned diffusion model. Stability AI emphasizes that the VAE's compressed representation allows for faster generation and training, while the text encoder conditions the model on text prompts.

To train Stable Audio, Stability AI says it used a dataset of more than 800,000 audio files, including music, sound effects, and single-instrument stems. The dataset was acquired through a partnership with stock music provider AudioSparx and totals more than 19,500 hours of audio.
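For a sense of scale, the two dataset figures can be combined into a rough average file length. Both numbers are stated as lower bounds ("over" and "more than"), so the result below is only a ballpark estimate, not a statistic reported by Stability AI.

```python
TOTAL_HOURS = 19_500    # from the article (lower bound)
FILE_COUNT = 800_000    # from the article (lower bound)

# Convert hours to seconds, then divide by the number of files.
avg_seconds = TOTAL_HOURS * 3600 / FILE_COUNT

print(f"{avg_seconds:.2f} s")  # 87.75 s
```

An average of about a minute and a half per file is consistent with a mix of full tracks and much shorter sound effects and stems.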

Stability AI’s generative audio research lab, Harmonai, says it is committed to refining Stable Audio’s architecture and training procedures. The team is focused on enhancing output quality, controllability, inference speed, and output length. The company also indicates that upcoming releases will include open-source models based on Stable Audio.

Featured Image via Midjourney